The Hard Part About Production ML Isn’t Getting Started...

Part 1/n on data quality for production ML, inspired by Chad & Mark's new O'Reilly book on data contracts.

Hey friends! It’s been a while since I last posted, but life happened.

For one thing, I recently spent 3 weeks in India 🇮🇳 getting engaged 💍! (Feel free to check out my IG for some pics from the trip!)

6 months (ish?) ago I also took on a new role as Head of AI Developer Relations at Labelbox, where data quality is something I think about quite a bit, especially constructing high-quality datasets for machine learning teams (including teams working on Generative AI use cases).

I feel incredibly fortunate to have gotten a sneak peek of Chad Sanderson & Mark Freeman’s new book, which they recently announced on the Data Products substack.

I first started organizing my thoughts on data quality in the Gen-AI world with “Why Data Quality Is More Important Than Ever in an AI-Driven World”.

Specifically I concluded with:

“Data quality can have, and has had, detrimental impacts on machine learning projects with measurable negative societal and individual impacts.

Advances in Generative AI are highlighting the increasing and aggressive demand, as well as potential shortage, of high-quality data.

There are specific ways that data quality impacts the machine learning product development cycle, including the ongoing maintenance costs of ML pipelines.”

In the next two posts, I want to talk more about how:

Understanding the needs of your ML system is important in determining the trade-offs of data quality at different points of the ML lifecycle.

The impact of data drift can be significant on your system and understanding the types of drift along with the strategies for monitoring and addressing them will help.

Continuous monitoring, adaptation, and a data-centric approach in MLOps are crucial to maintaining a competitive edge for any team delivering ML products (whether they be “traditional” deep-learning, computer vision or NLP systems or the new Gen-AI kids on the block).

After all, the hard part about production ML isn’t setting it up,…

… It’s Keeping It Going: Chasing Operation Vacation

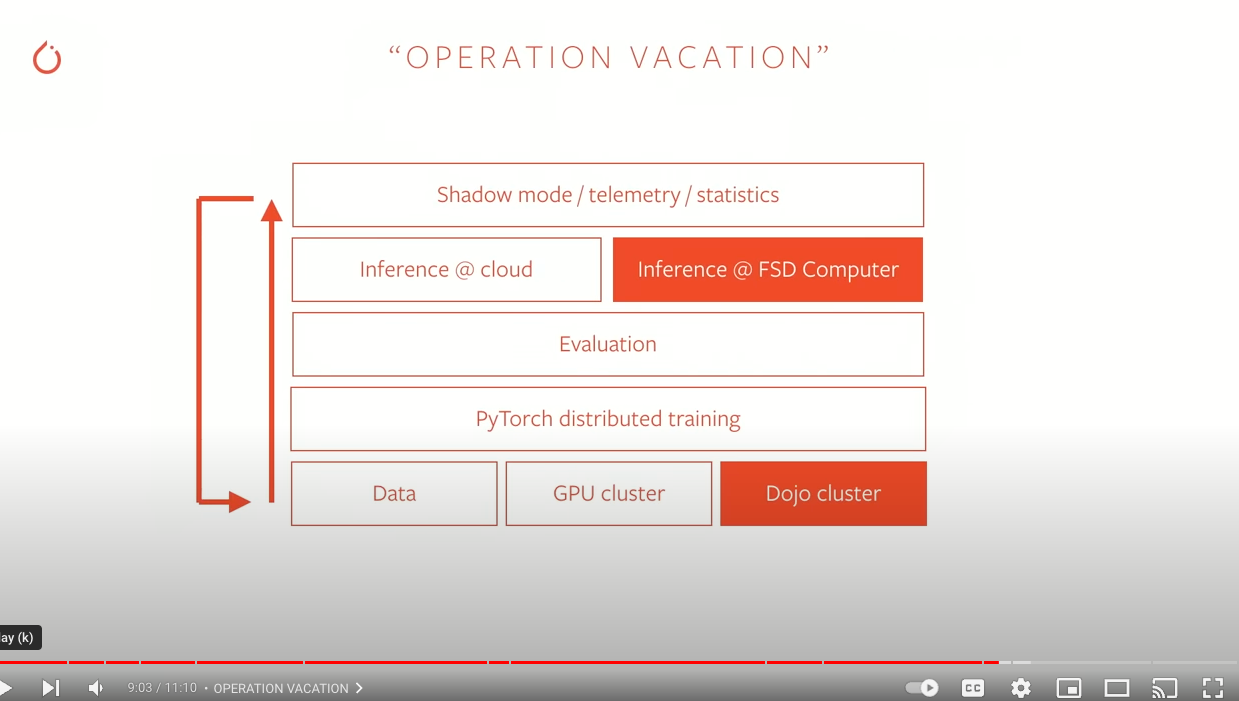

Karpathy has coined many “Karpathyisms” during his stints at OpenAI, Tesla, and Stanford. One that took hold of the minds of MLOps and product ML practitioners was the phrase “Operation Vacation”.

What is “Operation Vacation” mode and why is it so hard to achieve?

CI/CD for Models & Data for Hands-Off Kaizen

While helping to develop and scale the machine learning efforts and platform behind Tesla’s self-driving capabilities (like Autopilot and Smart Summon), Karpathy gave a talk about scaling PyTorch. The main goal of the “data engine” was to ensure that the models powering those capabilities continued improving as additional data was collected and labeled.

As data came in from the various sensors deployed throughout each Tesla vehicle (across the entire fleet), it would be processed, go through some combination of human and programmatic labeling and evaluation, and eventually be used to train and evaluate models. Improvements would be released and deployed, with inference performed in real-time (or near real-time), and evaluated just as quickly as data came back in from the field.

Rinse and repeat.

This sounds delightful – so why are most companies not in a position to accomplish anything close to “Operation Vacation”, complete with pina coladas and Hawaiian shirts?

The Requirements: Automation, Timing, and Proactive Detection

There were three key requirements met by Tesla’s data engine that ensured its success in scaling out their SOTA machine learning efforts.

Right Data, Right Time

Automated & Triggered Model Retraining

Proactive Data Drift Detection

1. Right Data, Right Time

Some figures and insights that help us understand the volume, velocity, and variety of data that Tesla’s data and ML engine needs to crunch through include:

“The total fleet with Autopilot Hardware 2 and above currently stands at around 650,000.”

Additional data points Karpathy provided in the 2019 talk:

Autopilot had accumulated: 1 billion miles, 200K lane changes, 50+ countries

Smart Summon: 500K sessions (of people calling their cars to them)

Various data modalities leveraged (such as video, image, LiDAR, geospatial, and software telemetry).

When Autopilot is engaged, actions need to be taken in real-time (i.e. real-time inference).

Data needs to be processed as soon as it comes in (which also implies models need to be edge friendly, meeting limited computation and memory requirements).

Additionally, when models are trained offline (whether batch or mini-batch), the errors need to be evaluated and understood before the model is deployed.

What edge cases need to be labeled?

Is there additional data that needs to be collected at 3am on a winter day in Arizona?

What about covered stop signs?

While there are many different ways to combine data collection, processing, featurization, model training, deployment and inference, each use case will have specific needs that dictate the predominant patterns that should be used.

For example, the volume of data (based on the figures above) required a level of sophistication in terms of infrastructure (or at least at the time it did).

If all the data that came in had to be hand-annotated and labeled, an immediate bottleneck would occur, with the machine learning engineers unable to access the data they needed to train their models when they needed it.

(Note: There are now MUCH easier ways to accomplish this task for human and model-assisted labeling *coughLabelboxcough*)

Data would need to be sampled so that humans would only be needed to identify edge cases, or situations where the model prediction didn’t match the human action taken or the confidence of the prediction was low.
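To make that sampling idea concrete, here's a minimal sketch of how frames might get routed to human review. Everything in it is hypothetical on my part: the `Prediction` fields, the helper names, and the 0.6 confidence cutoff are illustrative assumptions, not Tesla's actual pipeline.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    frame_id: str
    model_action: str   # what the model predicted, e.g. "brake"
    human_action: str   # what the driver actually did
    confidence: float   # model confidence in [0, 1]


def needs_human_review(pred: Prediction, min_confidence: float = 0.6) -> bool:
    """Flag a frame when the model disagreed with the driver or was unsure."""
    disagreed = pred.model_action != pred.human_action
    low_confidence = pred.confidence < min_confidence
    return disagreed or low_confidence


def select_for_labeling(preds, min_confidence: float = 0.6):
    """Return only the frames worth a human labeler's time."""
    return [p for p in preds if needs_human_review(p, min_confidence)]


predictions = [
    Prediction("f1", "cruise", "cruise", 0.97),  # agrees and confident: skip
    Prediction("f2", "cruise", "brake", 0.91),   # disagreement: review
    Prediction("f3", "stop", "stop", 0.42),      # low confidence: review
]
review_queue = select_for_labeling(predictions)
print([p.frame_id for p in review_queue])  # ['f2', 'f3']
```

The cutoff is the knob to tune here: raise it and labelers see more frames, lower it and you risk missing edge cases.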

And to select the scenes that caused confusion, metadata about each scene would need to be provided in order to understand what factors contributed to the model’s low confidence score for that specific prediction.
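Here's a rough sketch of what "using scene metadata to explain confusion" could look like in practice. The metadata keys (`time_of_day`, `weather`, `sign_occluded`) and the `top_factors` helper are made up for illustration; a real system would carry far richer metadata.

```python
from collections import Counter

# Hypothetical metadata attached to scenes where the model was unsure.
low_confidence_scenes = [
    {"frame_id": "a", "time_of_day": "night", "weather": "snow", "sign_occluded": True},
    {"frame_id": "b", "time_of_day": "night", "weather": "clear", "sign_occluded": True},
    {"frame_id": "c", "time_of_day": "night", "weather": "snow", "sign_occluded": False},
    {"frame_id": "d", "time_of_day": "day", "weather": "rain", "sign_occluded": True},
]


def top_factors(scenes, keys):
    """For each metadata key, return the most common value and its count."""
    result = {}
    for key in keys:
        counts = Counter(scene[key] for scene in scenes)
        result[key] = counts.most_common(1)[0]
    return result


factors = top_factors(low_confidence_scenes, ["time_of_day", "weather", "sign_occluded"])
print(factors)
# {'time_of_day': ('night', 3), 'weather': ('snow', 2), 'sign_occluded': (True, 3)}
```

One caveat: raw counts like these only tell you what's common among the confusing scenes. To know whether night driving is actually over-represented, you'd want to compare against how often it shows up in the full dataset.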

2. Automated Retraining

The first challenge of production machine learning is to go from 0→1, i.e. to get your first model trained and deployed (and not just as a notebook).

The next challenge is ensuring a continuous cycle of model improvement. Model retraining is intimately tied with data operations because data needs to be collected about how the model performed as well as new data the model hadn’t seen.

The hidden secret of high-performing machine learning organizations is the data flywheels that power their innovation, usually built on first-party application data: data that can’t easily be found on the web and that’s generated through user interactions with the company’s own products and services.

In “Operation Vacation” mode, as soon as performance drops or model predictions deviate from expected behavior, the data processing and model retraining cycle should kick off. A new model is trained on the data collected in the meantime, tested, and, if it passes an established performance threshold, deployed.
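As a minimal sketch of that trigger-and-gate loop, assuming accuracy is the metric you monitor: the function names, the 0.05 allowed drop, and the 0.90 deployment bar below are all placeholders I picked for illustration, and the `train_fn` / `evaluate_fn` / `deploy_fn` callables stand in for whatever your own pipeline provides.

```python
def should_retrain(recent_accuracy: float,
                   baseline_accuracy: float,
                   max_drop: float = 0.05) -> bool:
    """Trigger when live accuracy falls more than `max_drop` below baseline."""
    return (baseline_accuracy - recent_accuracy) > max_drop


def retrain_and_maybe_deploy(train_fn, evaluate_fn, deploy_fn,
                             min_accuracy: float = 0.90):
    """Train a candidate on fresh data; deploy only if it clears the bar."""
    candidate = train_fn()
    score = evaluate_fn(candidate)
    if score >= min_accuracy:
        deploy_fn(candidate)
        return candidate
    return None  # keep serving the current model


# Stubbed usage: in a real system these would call your training,
# evaluation, and deployment jobs.
deployed = []
if should_retrain(recent_accuracy=0.84, baseline_accuracy=0.92):
    retrain_and_maybe_deploy(
        train_fn=lambda: "model-v2",
        evaluate_fn=lambda model: 0.93,
        deploy_fn=deployed.append,
    )
print(deployed)  # ['model-v2']
```

The important design choice is the gate: a retrained model that doesn't clear the threshold never ships, so a bad batch of new data can't silently make things worse.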

Keep your eyes open for Part 2 of this mini-series on data quality, where I start covering the impact of drift on model performance.