Managing Drift & Data Quality

Part 3/n of a high-level intro to why all models (even gen-AI) will change & how to start responding on the data level

Hey folks!

Thanks for sticking with during this *super* high-level mini-series introducing data drift, data change management, and why data engines are important.

We’ve barely scratched the surface on these topics, especially as patterns and tooling keep changing.

Like my friends Joe Reis & Chad Sanderson talk about however, “the more things change, the more they stay the same”.

In future blog posts I’ll start building on some of the principles I’ve been exploring in this series, specifically the fact that:

I said what I said.

Data Change Management Strategies in ML

The Complications of Managing Data Changes at the Individual Model Level (For All Models)

Re-Training Isn’t Free

For toy models and small datasets, retraining is practically free.

The beauty of the commoditization of computer and storage (especially serverless) and the plethora of options for both distributed and local data preparation and training frameworks means a solopreneur can tweak and fiddle around with their model and data endlessly for less than the price of an artisanal burger (roasted brussel sprouts and sweet potato fries not included).

This is absolutely not the case for big data and big teams, where data quality issues have really scaled up because of sheer size and complexity.

At a relatively recent email company I worked at, for example, we had pipelines that easily cost up-to 6 figures for compute and storage. The unofficial advice was if the cost of the pipeline job was less than 6 figures, it didn’t need VP sign-off. This is an extreme example of a different end of the spectrum, of an enterprise company that had an all-you-can-eat deal with a major cloud company committed to a certain level of spend.

Removing Features Requires Pinpoint Precision and Instrumentation

The challenge of trying to isolate specific features potentially resulting in drift is made painfully acute by the curse of dimensionality.

For example, in a 20 feature model, using a statistical approach would require collecting time-variant measure per class, feature, and additional metadata as needed, potentially resulting in some n^20 types of analyses and measures.

Monitoring & Alerts Aren’t Free Either

And when all is said and done, the answer to data drift isn’t always “pile on more monitoring”.

To be clear, there is a place for alerts and monitoring.

Monitoring while lagging, provides the baseline for understanding historic performance.

Alerting (when done well) is focused a bit more upstream, used to detect problems that need to be addressed immediately.

“In theory, theory and practice are the same. In practice, they are not.” - Unknown

Why Throwing Monitoring At ML Pipelines Doesn’t Fix Drift

What actually happens on the way to a *perfectly” instrumented portfolio of ML models?

More Components, More Problems

Complexity scales – Drastically, in the case of ML pipelines.

The number of components to monitor rapidly increase because of:

Increase in specialized hardware configurations – Ex: GPUs, TPUs, etc

Complex compliance requirements that result in multiple artifacts and checkpoints to be collected, versioned

Interwoven dependency graphs – A single data point can travel through a Mario-world like maze of tools, containers, and transformations.

The journey of data through a data or ML platform isn’t a simple, unidirectional sequence of data => model => code.

It’s more akin to a supply chain of dominoes, where a single upstream change in population distribution, data properties, etc can cause massive downstream impacts including impacts on throughput and latency of the service, crashes, unexpected predictions, and both “silent” and “loud” failures.

Alert Fatigue Is Real

The Slack channel that cries “wolf” is quickly muted. Suddenly, having detailed telemetry no longer seems…attractive.

The point of setting up alerts is so that the right owners can quickly take action and either patch, rollback, or address the cause of the alert.

Yet alerts tend to be haphazardly setup, missing important information like:

What was supposed to happen – i.e. expected behaviors, target metrics

What did happen – i.e. level of deviation, possible upstream cause

What needs to happen as a result – i.e. what action(s) need to be taken by who and when.

Some alerts won’t even have the most important information readily legible like what pipeline was impacted at what stage.

To Conclude: ML Quality Mostly Data Quality

Why talk so much about data drift in a blog series about data quality in a Substack about production ML and MLOps?

Data Quality Issues Can Cause Drift or Look Like Drift

They aren’t, by definition, the same. To be clear, drift can be a perfectly normal phenomenon. Model drift will happen even when the data is high-quality because change is a part of everyday life.

But upstream changes by data producers can also cause model performance to drift and are sometimes indistinguishable from drift, especially if changes in application code influence user behavior in the app.

This is a big reason why retraining and feature tinkery aren’t the solution to all cases of supposed drift.

Change Management Needs To Be Supported By Data Quality Infrastructure

Monitoring and managing ML quality is largely managing data quality.

And there is no scenario where even a well-architected platform can catch every problem in time or where the accountability for quality can rest on the shoulders of a single SWE or SRE.

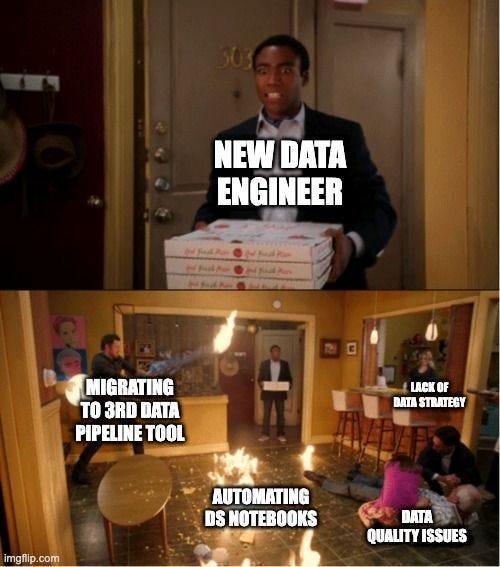

At large companies, a Site Reliability Engineer (a term coined and popularized to describe a very specific type of “fixer” engineer) may have to sit on hundreds of pipelines, putting out multiple fires without any knowledge of what the pipelines are doing.

Data scientists and ML Engineers might have the domain knowledge of the project but lack the ability (or even access to prod) to solve problems as they arise. Especially in organizations where a separate set of SWEs are tasked with translating Jupyter notebooks into production grade pipelines, there is a fragmentation of ownership that results in no one person *successfully* saving the day without significant cost.

As Chad Sanderson and On the Mark Data talk about in their book, for an org to scale change management, it’s data quality infrastructure needs to help by:

Codifying domain knowledge of business stakeholders with respect to valuable data use cases and disseminating such knowledge across the organization.

Creating meaningful constraints on the data systems to uphold data standards to the expected domain knowledge.

Identifying when data drift occurs, what type of drift occurs, and its impact on the business.

Alerting key stakeholders when drift impacts an agreed-upon threshold to data quality.

Rectifying data quality or updating the business logic to better match real-world truth after data drift.

Conclusion

So to finish off the mini-series, a recap:

There are specific ways that data quality impacts the machine learning product development cycle, including the ongoing maintenance costs of ML pipelines.

Understanding the needs of your ML system are important in determining the trade-offs of data quality and different points of the ML lifecycle.

The impact of data drift can be significant on your system and understanding the types of drift along with the strategies for monitoring and addressing them will help.

Topics you’d like me to cover in-depth? Tutorials & implementations that would be helpful? Feel free to comment below 👇 or even to reach out to me on Twitter or LinkedIn.